Local Transcribe with Whisper

🍎 Apple Silicon GPU/NPU acceleration: This version now supports native Apple GPU/NPU acceleration via MLX Whisper. On Apple Silicon Macs, transcription runs on the Apple GPU and Neural Engine — no CPU fallback needed.

Local Transcribe with Whisper is a user-friendly desktop application that allows you to transcribe audio and video files using the Whisper ASR system, powered by faster-whisper (CTranslate2) on Windows/Linux and MLX Whisper on Apple Silicon. This application provides a graphical user interface (GUI) built with Python and the Tkinter library, making it easy to use even for those not familiar with programming.

New in version 3.0!

- Apple Silicon GPU/NPU support — native MLX backend for Apple Silicon Macs, using Apple GPU + Neural Engine.

- SRT subtitle export — valid SubRip files alongside the existing TXT output, ready for HandBrake or any video player.

- VAD filter — removes silence, reduces hallucination, improves accuracy.

- Word-level timestamps — per-word SRT timing for precise subtitle burning.

- Translation mode — transcribe any language and translate to English in one step.

- Stop button — immediately cancel any transcription, including model downloads.

- Language dropdown — 99 languages with proper ISO codes, no more guessing formats.

- Model descriptions — speed, size, quality stars, and use case shown for every model.

New in version 2.0!

- Switched to faster-whisper — up to 4× faster transcription with lower memory usage, simpler installation.

- Swedish-optimised models — KB-Whisper from the National Library of Sweden (KBLab)

- No separate FFmpeg installation needed — audio decoding is handled by the bundled PyAV library.

- No admin rights required — a plain pip install covers everything.

- No PyTorch dependency — dramatically smaller install footprint.

- Integrated console - all info in the same application.

- tiny model added — smallest and fastest option.

Features

- Select the folder containing the audio or video files you want to transcribe. Tested with m4a video.

- Choose the language of the files you are transcribing from a dropdown of 99 supported languages, or let the application automatically detect the language.

- Select the Whisper model to use for the transcription. Available models include "tiny", "tiny.en", "base", "base.en", "small", "small.en", "medium", "medium.en", "large-v2", and "large-v3". Models ending in .en are English-only and give better results on English audio, especially at the base and small sizes.

- Swedish-optimised models — KB-Whisper from the National Library of Sweden (KBLab) is available in all sizes (tiny → large). These models reduce Word Error Rate by up to 47 % compared to OpenAI Whisper on Swedish speech. The language is set to Swedish automatically when a KB model is selected.

- VAD filter — removes silence from audio before transcription, reducing hallucination and improving accuracy.

- Word-level timestamps — generates per-word timing in the SRT output for precise subtitle synchronization.

- Translation mode — transcribes audio in any language and translates the result to English.

- SRT export — valid SubRip subtitle files saved alongside TXT, ready for HandBrake or any video player.

- Monitor the progress of the transcription with the progress bar and terminal.

- Confirmation dialog before starting the transcription to ensure you have selected the correct folder.

- View the transcribed text in a message box once the transcription is completed.

- Stop button — immediately cancel transcription, including model downloads.
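To make the SRT export concrete, here is a minimal sketch of how timed segments map onto SubRip cues. It is not the application's own code: the function names srt_timestamp and to_srt are hypothetical, and the input is assumed to be a list of (start, end, text) tuples with times in seconds.

```python
from typing import Iterable, Tuple


def srt_timestamp(seconds: float) -> str:
    """Format a time in seconds as an SRT timestamp: HH:MM:SS,mmm."""
    total_ms = int(round(seconds * 1000))
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, millis = divmod(rest, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{millis:03d}"


def to_srt(cues: Iterable[Tuple[float, float, str]]) -> str:
    """Build SubRip text from (start, end, text) tuples (hypothetical helper)."""
    blocks = []
    for index, (start, end, text) in enumerate(cues, start=1):
        # Each cue: running number, time range, text, then a blank line.
        blocks.append(
            f"{index}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text.strip()}\n"
        )
    return "\n".join(blocks)


if __name__ == "__main__":
    print(to_srt([(0.0, 1.5, "Hello"), (1.5, 3.25, "world")]))
```

With word-level timestamps enabled, the same helper could be fed one tuple per word instead of one per segment.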

Installation

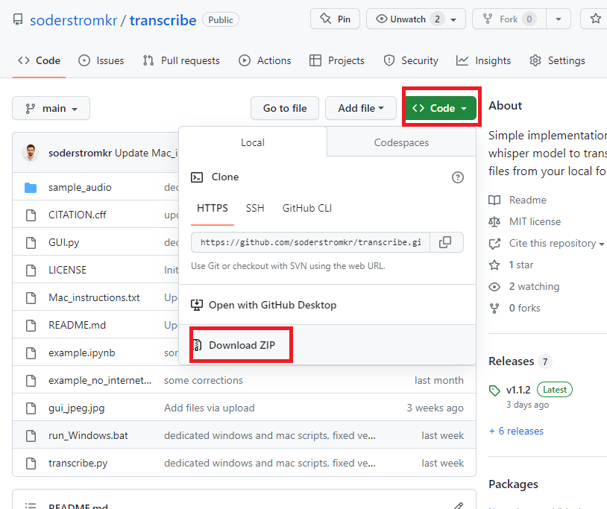

Get the files

Download the zip folder and extract it to your preferred working folder.

Or by cloning the repository with:


git clone https://gitea.kobim.cloud/kobim/whisper-local-transcribe.git

Prerequisites

Install Python 3.10 or later. Some IT policies allow installing it from the Microsoft Store or its Mac equivalent, but I recommend the installer from python.org. During installation, check "Add Python to PATH". No administrator rights are needed if you install for your user only.

Run on Windows

Double-click run_Windows.bat — it will auto-install everything on first run.

Run on Mac / Linux

Run ./run_Mac.sh — it will auto-install everything on first run. See Mac instructions for details.

Note: The first run with a given model will download it (~75 MB for base, ~500 MB for medium). After that, everything works offline.

Manual installation (if the launchers don't work)

If run_Windows.bat or run_Mac.sh fails (e.g. Python isn't on PATH, or there are permission issues), open a terminal in the project folder and run these steps manually:

python -m venv .venv

Activate the virtual environment:

- Windows:

.venv\Scripts\activate

- Mac / Linux:

source .venv/bin/activate

Then install and run:

python install.py

python app.py

GPU Support

Apple Silicon

On Macs with Apple Silicon, the app automatically uses the MLX backend, which runs inference on the Apple GPU and Neural Engine. No additional setup is needed — just install and run. MLX models are downloaded from HuggingFace on first use.

NVIDIA GPUs

This program supports NVIDIA GPUs, which can significantly speed up transcription. faster-whisper uses CTranslate2, which requires the NVIDIA CUDA libraries for GPU acceleration.

Automatic Detection

The install.py script automatically detects NVIDIA GPUs and will ask if you want to install GPU support. If you skipped it during installation, you can add it anytime:

pip install nvidia-cublas-cu12 nvidia-cudnn-cu12

Note: Make sure your NVIDIA GPU drivers are up to date. You can check by running nvidia-smi in your terminal. The program will automatically detect and use your GPU if available, otherwise it falls back to CPU.
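The fallback order described above (MLX on Apple Silicon, CUDA when an NVIDIA GPU is usable, CPU otherwise) might look roughly like the sketch below. This is an assumption about the selection logic, not the app's actual implementation; pick_backend and cuda_device_count are hypothetical names. The only library call used is ctranslate2.get_cuda_device_count(), and it is guarded so the snippet also runs where CTranslate2 is not installed.

```python
import platform
import sys


def cuda_device_count() -> int:
    """Return the number of CUDA devices CTranslate2 can see, or 0."""
    try:
        import ctranslate2  # optional; installed together with faster-whisper
        return ctranslate2.get_cuda_device_count()
    except Exception:
        # Missing package or missing CUDA libraries: treat as "no GPU".
        return 0


def pick_backend(system: str, machine: str, cuda_devices: int) -> str:
    """Hypothetical selection order: MLX first, then CUDA, then CPU."""
    if system == "darwin" and machine == "arm64":
        return "mlx"
    if cuda_devices > 0:
        return "cuda"
    return "cpu"


if __name__ == "__main__":
    print(pick_backend(sys.platform, platform.machine(), cuda_device_count()))
```

Keeping the decision in a pure function that takes the platform values as arguments makes it easy to check each branch without needing the matching hardware.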

Verifying GPU Support

After installation, you can verify that your GPU is available by running the following in a Python session:

import ctranslate2

print(ctranslate2.get_supported_compute_types("cuda"))

If the printed set includes "float16", GPU acceleration is working.

Usage

- Launch the app — the built-in console panel at the bottom shows a welcome message and all progress updates. The backend indicator at the bottom shows which inference engine is active (MLX · Apple GPU/NPU, CUDA · GPU, or CPU · int8).

- Select the folder containing the audio or video files you want to transcribe by clicking the "Browse" button next to the "Folder" label. This will open a file dialog where you can navigate to the desired folder. Remember, you won't be choosing individual files but whole folders!

- Select the language from the dropdown — 99 languages are available, or leave it on "Auto-detect". For English-only models (.en) the language is locked to English; for KB Swedish models it's locked to Swedish.

- Choose the Whisper model to use for the transcription from the dropdown list next to the "Model" label. A description below shows speed, size, quality stars, and recommended use case for each model.

- Toggle advanced options if needed: VAD filter, Word-level timestamps, or Translate to English.

- Click the "Transcribe" button to start the transcription. Use the "Stop" button to cancel at any time.

- Monitor progress in the embedded console panel — it shows model loading, per-file progress, and segment timestamps in real time.

- Once the transcription is completed, a message box will appear displaying the result. Click "OK" to close it.

- Transcriptions are saved as both .txt (human-readable) and .srt (SubRip subtitles) in the transcriptions/ folder within the selected directory.

- You can run the application again or quit at any time by clicking the "Quit" button.
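As an illustration of the folder-based workflow, the sketch below lists the media files in a selected folder and works out where their outputs would land. It rests on assumptions: the extension set is a guess, the output files are assumed to reuse the source file's base name, and plan_outputs is a hypothetical helper rather than a function from this project. Only the transcriptions/ folder name comes from the steps above.

```python
from pathlib import Path

# Assumed set of extensions; the app may accept more or fewer.
MEDIA_EXTENSIONS = {".m4a", ".mp3", ".mp4", ".wav"}


def plan_outputs(folder: Path) -> dict:
    """Map each media file in `folder` to its planned (.txt, .srt) paths."""
    out_dir = folder / "transcriptions"
    plan = {}
    for media in sorted(folder.iterdir()):
        # Skip subfolders and anything that is not a known media type.
        if media.is_file() and media.suffix.lower() in MEDIA_EXTENSIONS:
            plan[media.name] = (
                out_dir / f"{media.stem}.txt",
                out_dir / f"{media.stem}.srt",
            )
    return plan


if __name__ == "__main__":
    for name, (txt_path, srt_path) in plan_outputs(Path(".")).items():
        print(name, "->", txt_path, "and", srt_path)
```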

Jupyter Notebook

Don't want fancy EXEs or GUIs? Use the function as is. See example for an implementation in a Jupyter Notebook.
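If you would rather call faster-whisper directly from a notebook cell, a minimal sketch could look like this. It is not the project's own function: build_options and transcribe_file are hypothetical names, and the CPU/int8 settings are only an example. The keyword arguments (language, task, vad_filter, word_timestamps) are the ones accepted by WhisperModel.transcribe in faster-whisper, and they mirror the GUI's VAD, word-timestamp, and translation toggles.

```python
from typing import Optional


def build_options(language: Optional[str] = None, vad: bool = True,
                  word_timestamps: bool = False, translate: bool = False) -> dict:
    """Turn GUI-style toggles into faster-whisper keyword arguments."""
    return {
        "language": language,  # None lets Whisper auto-detect the language
        "vad_filter": vad,
        "word_timestamps": word_timestamps,
        "task": "translate" if translate else "transcribe",
    }


def transcribe_file(path: str, model_name: str = "base", **toggles) -> str:
    """Transcribe one file on CPU and return the joined segment text."""
    # Imported lazily so the helper above works without the package installed.
    from faster_whisper import WhisperModel

    # The first call with a given model name downloads that model.
    model = WhisperModel(model_name, device="cpu", compute_type="int8")
    segments, info = model.transcribe(path, **build_options(**toggles))
    print(f"Detected language: {info.language}")
    # `segments` is a generator; iterating it runs the actual transcription.
    return " ".join(segment.text.strip() for segment in segments)
```

For example, transcribe_file("interview.m4a", language="sv", translate=True) would return an English translation of a Swedish recording, where the file name is a placeholder.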